The Science Behind Glider AI

Hiring and workforce decisions should be evidence-based. Glider AI's skills validation capabilities are built on industrial-organizational psychology, psychometric science, and applied performance analytics.

Every assessment, interview, and integrity check is engineered to measure what actually predicts success on the job. We do not guess. We measure.

Download the Reliability & Validity Handbook


Structure + Science Improves Outcomes

Intuition Does Not Scale.

The cost of a bad hire is measurable. Research shows it can exceed 1.4x annual salary. Unstructured hiring methods create:

The Glider AI Difference

Built on Academic Best Practices and Practical, Real-World Application.

Our CEO’s prior venture established early frameworks in skill simulation and assessment science. Glider AI extends that foundation into a unified Skills Validation Platform that evaluates:

Structured. Validated. Continuously Calibrated.

Role & Skill Deconstruction

We begin by mapping the role to measurable competencies and outcomes. Every assessment and interview is aligned to defined performance criteria — not generic skill lists. Clear job definitions reduce bias and strengthen validity.

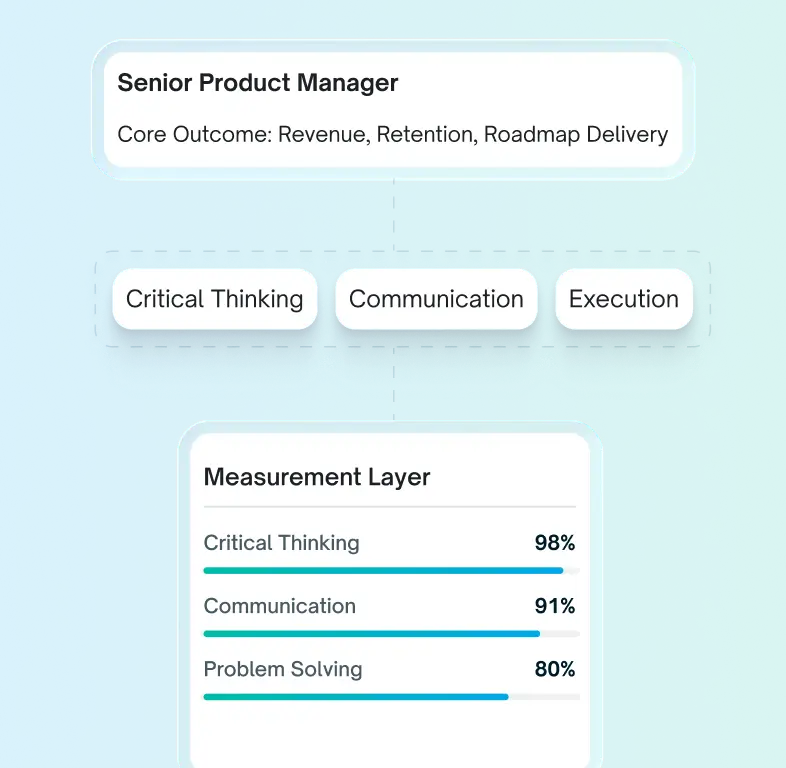

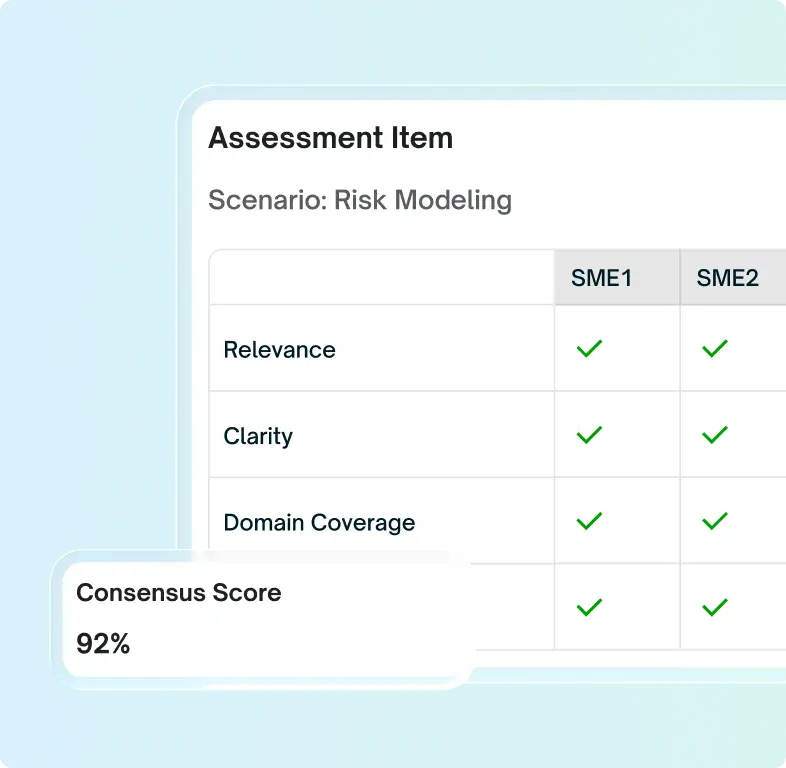

Expert-Led Content Development

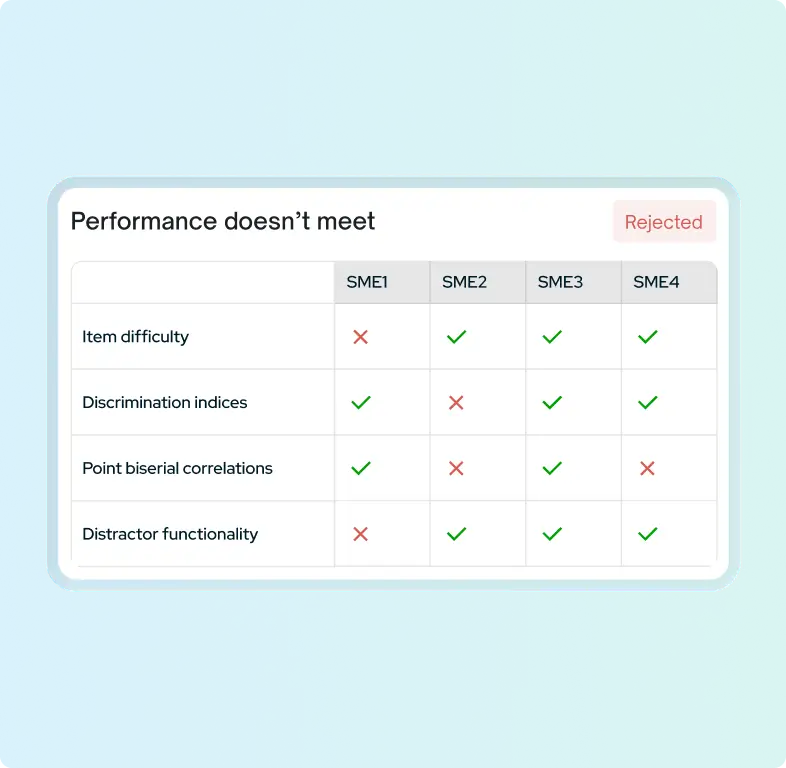

Assessments are developed by internal I/O psychologists and external subject matter experts. Each item undergoes:

- Qualitative review (relevance, clarity, domain coverage)

- Quantitative expert agreement validation

- Iterative refinement

- Tryout with representative populations

If expert consensus is not reached, the item is rejected. More than 1,000 external experts contribute to content validation.
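One common way to quantify expert agreement on a single item is Lawshe's content validity ratio (CVR). The sketch below is illustrative only: the page does not say which agreement statistic Glider uses, and the function names and the `threshold` value are hypothetical.

```python
def content_validity_ratio(essential_votes: int, panel_size: int) -> float:
    """Lawshe's CVR: -1 when no expert rates the item essential, +1 when all do."""
    if panel_size <= 0 or not 0 <= essential_votes <= panel_size:
        raise ValueError("essential_votes must be between 0 and panel_size")
    half = panel_size / 2
    return (essential_votes - half) / half


def keep_item(essential_votes: int, panel_size: int, threshold: float = 0.5) -> bool:
    """Keep an item only if expert agreement meets the cutoff.

    The 0.5 default is a placeholder assumption, not a published Glider cutoff.
    """
    return content_validity_ratio(essential_votes, panel_size) >= threshold
```

With a panel of 10 experts, an item rated essential by 9 of them has a CVR of 0.8 and would be kept under this placeholder cutoff, while an item rated essential by only 6 has a CVR of 0.2 and would be rejected.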

Reliability You Can Measure

Reliability refers to consistency in measurement. Glider assessments demonstrate:

- Strong test–retest reliability (Spearman-Brown coefficients ~.82 in sample data)

- High internal consistency (Cronbach’s alpha ~.80)
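For readers unfamiliar with these coefficients, here is a minimal sketch of the standard textbook formulas for Cronbach's alpha and the Spearman-Brown adjustment. It assumes a score matrix with one row per test-taker and one column per item. The function names are illustrative, and nothing here describes how Glider computed the figures above.

```python
from statistics import variance


def cronbach_alpha(scores: list[list[float]]) -> float:
    """Internal consistency from a matrix: one row per test-taker, one column per item."""
    if len(scores) < 2 or len(scores[0]) < 2:
        raise ValueError("need at least two test-takers and two items")
    k = len(scores[0])
    # Sample variance of each item column, and of each person's total score.
    item_vars = [variance([row[i] for row in scores]) for i in range(k)]
    total_var = variance([sum(row) for row in scores])
    if total_var == 0:
        raise ValueError("total scores have zero variance")
    return (k / (k - 1)) * (1 - sum(item_vars) / total_var)


def spearman_brown(r: float, factor: float = 2.0) -> float:
    """Predicted reliability when test length is multiplied by `factor`.

    With factor=2 this is the usual step-up applied to a half-test correlation.
    """
    return factor * r / (1 + (factor - 1) * r)
```

Alpha approaches 1 as items rise and fall together across test-takers. The Spearman-Brown formula takes a correlation `r` between two halves (or two forms) and estimates the reliability of the full-length test.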

We conduct continuous item analysis, evaluating:

- Item difficulty

- Discrimination indices

- Point-biserial correlations

- Distractor functionality

Items that do not meet performance standards are revised or removed.
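The item statistics listed above have standard classical-test-theory definitions. The sketch below shows one way to compute three of them from dichotomous (0/1) item scores. The function names and the 27% grouping fraction are conventional choices assumed for illustration, not details taken from Glider's pipeline.

```python
from math import sqrt


def item_difficulty(item: list[int]) -> float:
    """Proportion of test-takers who answered the item correctly (1 = correct)."""
    return sum(item) / len(item)


def point_biserial(item: list[int], totals: list[float]) -> float:
    """Pearson correlation between a 0/1 item score and the total test score."""
    n = len(item)
    mean_i = sum(item) / n
    mean_t = sum(totals) / n
    cov = sum((x - mean_i) * (t - mean_t) for x, t in zip(item, totals))
    var_i = sum((x - mean_i) ** 2 for x in item)
    var_t = sum((t - mean_t) ** 2 for t in totals)
    if var_i == 0 or var_t == 0:
        raise ValueError("item or total scores have zero variance")
    return cov / sqrt(var_i * var_t)


def upper_lower_discrimination(item: list[int], totals: list[float],
                               fraction: float = 0.27) -> float:
    """Difference in item pass rate between the top and bottom scoring groups."""
    n = len(item)
    group = max(1, round(n * fraction))
    order = sorted(range(n), key=lambda i: totals[i])  # indices, lowest total first
    lower, upper = order[:group], order[-group:]
    p_upper = sum(item[i] for i in upper) / group
    p_lower = sum(item[i] for i in lower) / group
    return p_upper - p_lower
```

An item with difficulty near 0 or 1, or with a discrimination value near zero or negative, is a typical candidate for revision. Many analysts also exclude the item itself from the total score before correlating, which this sketch does not do.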

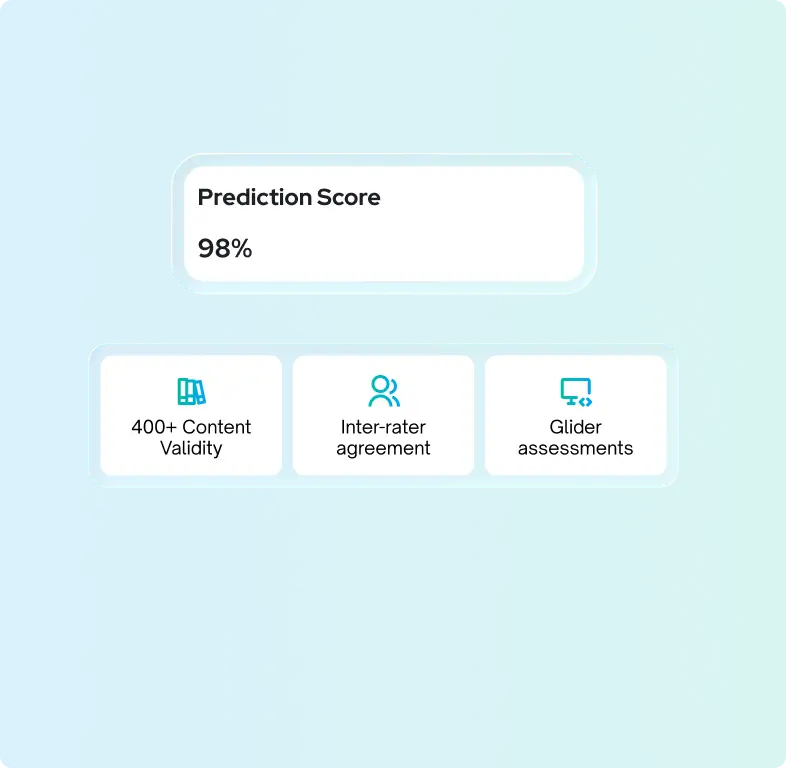

Validity That Predicts Performance

Validity refers to whether an assessment measures what it claims to measure. Glider demonstrates:

- Content validity across 400+ technical and domain skills

- Inter-rater agreement averaging approximately 77%

- Predictive validity showing candidates who clear Glider assessments are 3.6x more likely to be successfully placed

Assessment outcomes correlate with real-world performance.
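Two of the figures above are simple ratios, and a short sketch may make their meaning concrete. It assumes "inter-rater agreement" means raw percent agreement between two raters and "x times more likely" means a ratio of placement rates. Both readings are assumptions, and the function names are hypothetical.

```python
def percent_agreement(rater_a: list[str], rater_b: list[str]) -> float:
    """Share of items on which two raters gave the same rating."""
    if len(rater_a) != len(rater_b) or not rater_a:
        raise ValueError("raters must score the same non-empty set of items")
    matches = sum(a == b for a, b in zip(rater_a, rater_b))
    return matches / len(rater_a)


def placement_rate_ratio(placed_cleared: int, total_cleared: int,
                         placed_other: int, total_other: int) -> float:
    """Placement rate of candidates who cleared the assessment, divided by
    the placement rate of the comparison group."""
    rate_cleared = placed_cleared / total_cleared
    rate_other = placed_other / total_other
    if rate_other == 0:
        raise ValueError("comparison group has no placements")
    return rate_cleared / rate_other
```

Raw percent agreement does not correct for agreement that would occur by chance. Statistics such as Cohen's kappa do, which is worth keeping in mind when comparing agreement figures across tools.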

Evaluating capability, behavior, motivation, and integrity.

Can they do the job?

Technical and domain skill assessments

Simulated Web IDE environments

Will they do the job?

Behavioral and psychometric assessments

Do they want the job?

Motivational alignment and engagement indicators

Can they succeed in this job?

Structured, competency-based interviews

Where Science Meets Real-World Application

Assessments: Real-World Skills Evaluation

- 400+ technical and domain skills

- 30+ interactive question types

- Simulated environments

- Automated, objective scoring


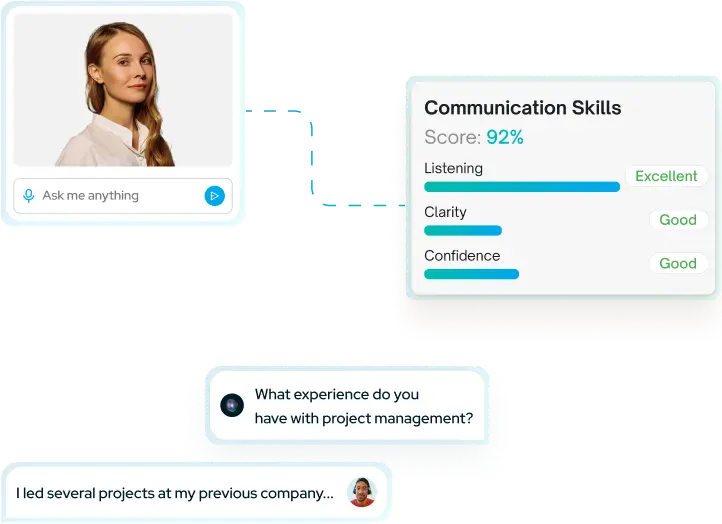

Interviews: Consistency at Speed and Scale

Unstructured interviews weaken predictive accuracy. Glider standardizes interviews through competency-based frameworks and structured scoring.

- Standardized evaluation rubrics

- AI-supported analysis with human oversight

- Fast, fair, and consistent candidate engagement

L&D: From Hiring to Skills Mastery

Skills are not static. Performance evolves. Glider extends its validated competency framework beyond hiring into practice-based learning and ongoing skill calibration.

- Team-level skill diagnostics

- Skill gap analysis across teams and individuals

- Self-paced, custom, practice-based learning

- Mastery tracking over time

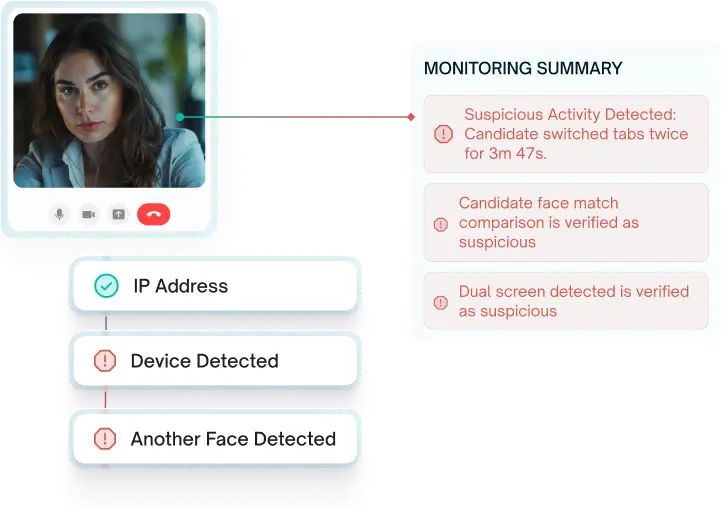

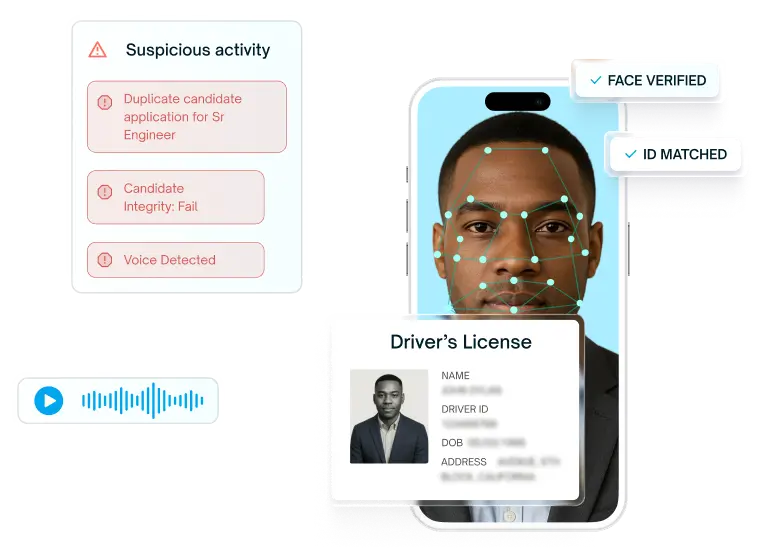

AI Proctoring & ID Verification: Trust the Results

Performance data must be authentic to be defensible. Glider integrates advanced integrity safeguards to protect the validity of every candidate evaluation.

- Audio and video monitoring

- Tab-switch detection

- Plagiarism detection

- Multiple face detection

- AI-enabled ID verification

Reliable. Valid. Defensible.

Glider AI delivers scientifically grounded, performance-aligned talent evaluation designed for enterprise hiring and workforce development.